A new German survey about referring doctors' views on the quality and comprehensiveness of radiology reports shows that the opinions and preferences tend to vary widely between different specialists. The findings were published in the European Journal of Radiology on 23 March by Dr. Philipp Reschke from Goethe University Hospital Frankfurt and colleagues.

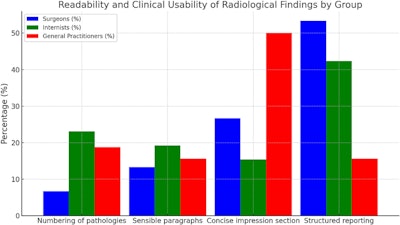

Surgeons show a stronger preference for concise reports over more detailed ones than either internists or general practitioners, while significantly more surgeons and internists than general practitioners prefer structured reporting to free-text reports, according to the researchers.

All figures courtesy of Dr. Philipp Reschke et al and the European Journal of Radiology.

“The quality of radiology reports depends on how well the needs of referring physicians are met,” they wrote. “However, the lack of direct communication and feedback between referring physicians and radiologists often creates gaps in understanding the clinical utility of these reports. Referring physicians might hesitate to express confusion, leading radiologists to overestimate the quality of their reports.”

The study was conducted as a prospective, anonymous online survey available from June 2023 to March 2025. The study cohort comprised 258 practicing physicians in both rural and urban Germany who regularly request radiology services, including 93 internists, 90 surgeons, and 75 general practitioners. The participants were randomly selected across all hierarchical levels.

A pilot test involving 10 physicians was conducted to test and refine the survey; after revisions were made incorporating the pilot participants’ feedback, the survey was emailed to the study cohort. The question order was randomized to minimize order effects, and anonymity was maintained to reduce response bias. A reminder was sent after four weeks. The survey included rating scales, multiple-choice questions, and net promoter scores (NPS). Satisfaction was scored on a scale from −100 to +100.
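For readers unfamiliar with NPS, the −100 to +100 range follows from how the score is conventionally defined: the percentage of promoters minus the percentage of detractors. The sketch below shows that conventional calculation, assuming a 0 to 10 rating scale with promoters at 9 to 10 and detractors at 0 to 6. The article does not describe how the study computed its scores, so the function name, the thresholds, and the sample ratings are illustrative assumptions, not the authors' method or data.

```python
def net_promoter_score(ratings):
    """Conventional NPS: percent promoters (9-10) minus percent detractors (0-6).

    Assumes each rating is on a 0-10 scale; ratings of 7-8 count as passive.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Made-up ratings for illustration only; not data from the study.
example_ratings = [10, 9, 9, 8, 7, 7, 6, 5, 10, 9]
print(net_promoter_score(example_ratings))  # 30.0
```

A score of +100 would mean every respondent is a promoter, and −100 would mean every respondent is a detractor.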

Preference questions covered report style (i.e., free-text or structured) and report length. Satisfaction questions covered readability and usability, how often radiological findings fully answered the question for which the patient was being referred, and the reasons physicians gave for incomplete reports. In addition, the survey asked the physicians if they found multidisciplinary meetings to be helpful in clarifying radiology reports.

Overall, referring physicians’ mean satisfaction with the completeness of radiology reports was 38.4 ± 24.3 on the −100 to +100 scale, with surgeons reporting the highest level of dissatisfaction.

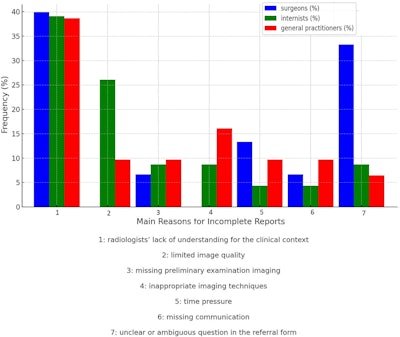

According to the surveyed referring physicians, the main reasons for incomplete reports included “radiologists’ lack of understanding for the clinical context” (36.1%); “missing preliminary examination imaging” (16%); “unclear or ambiguous question in the referral form” (12.6%); and “inappropriate imaging techniques” (10.3%). Surgeons reported “unclear or ambiguous questions in the referral form” as a main reason significantly more often than either internists or general practitioners, whereas internists reported “limited image quality” significantly more often than surgeons and general practitioners.

More of the study cohort reported having moderate difficulty finding medical information in the radiology report than the authors anticipated, with 63.2% having moderate difficulty, 32.5% having low difficulty, and 4.3% having high difficulty.

However, most participants found interdisciplinary case meetings helpful: overall, 84.9% said that such conferences increased their understanding of radiology reports.

The authors noted that some of the shortcomings the referring physicians mentioned would be hard to overcome without more extensive pre-imaging discussions between referring physician and radiologist: “More generally, limited pre-imaging consultation between referrers and radiologists, the exclusion of radiologists from assessing imaging appropriateness at the time of the imaging request, and the perception of imaging as a fait accompli on the day of the imaging examination contribute to incomplete reports in regards to all participants.”

Furthermore, they added, radiologists may lack sufficient background information to provide, or thoroughly understand, the proper clinical context in reports.

As for ambiguity, “the establishment of a definitive diagnosis in radiology reports provides referring physicians with clear guidance and certainty in clinical decision-making. This poses a challenge for radiologists, as imaging findings are often difficult to classify definitively,” the authors wrote.

Reschke and colleagues concluded that strengthening interdisciplinary collaboration is essential to maintaining the quality and relevance of radiology reports. While scheduling these meetings can be costly, their clinical value outweighs financial considerations, the authors added. They suggested targeted case discussions and structured meeting formats as a way to balance cost and utility.

The researchers noted several limitations to the study. It relies on self-reported data, and although they took steps to mitigate response bias, self-reporting can still introduce such bias. Offering predefined options may have constrained responses. Furthermore, the questions were not always context-based; context-based questions could have accounted for specific clinical scenarios or conditions. Additionally, including other subspecialties could have shown whether structured reporting offers advantages for particular types of reports. The authors suggested that future studies should include such subgroup analysis.
