Medical students aren't being taught enough about artificial intelligence (AI) technology, and radiologists need to actively consider how best to validate, approve, and integrate AI algorithms into their clinical practices, according to an editorial published online on 8 February in European Radiology.

In their editorial, Dr. Benoit Gallix, PhD, and Dr. Jaron Chong of McGill University in Montreal, Canada, noted that a recent study by researchers at the University of Cologne found that German medical students aren't afraid of AI and are optimistic that it will improve radiology and medicine in general. However, only 30% of the respondents agreed that these advances make radiology more exciting for them. Gallix and Chong were particularly troubled by how little information medical students had received about AI: Only half of the students were aware that AI is a hot topic in radiology, and fewer than one-third stated they had basic knowledge of the underlying AI technologies.

"Students also pointed out that their information came more from the media than from university teaching," they wrote. "Moreover, the students who were the most informed were also those who were the least afraid of this new technology. This indicates that there is a lot of room for teaching undergraduate students the fundamental principles of AI."

Fresher thinking is also needed about how AI will be used in practice in radiology, according to the authors.

"The reality is that AI technology will transform the radiology profession in a way that deserves to be better understood and taught at medical school," they wrote. "It is necessary for radiologists to learn to use this new technology to improve the management of our patients. AI will most certainly replace some of the tasks that radiologists do today and help us to be more accurate in other tasks."

Unanswered questions

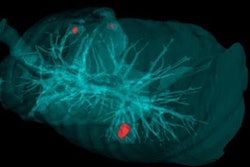

Multiple questions remain unanswered, such as the precise impact of AI on operations, insurance, funding, and business models, and how radiologists can improve the care provided to patients while preserving the long-term integrity of their profession. Furthermore, the authors asked, "How will we be able to rid ourselves of repetitive, boring, and thankless tasks -- such as the detection of metastases and their longitudinal follow-up -- to concentrate on actions requiring the integration of complex clinical and radiological data best suited for human intuition and insight?"

The speed of technological development makes this change difficult to manage. Algorithms are improving rapidly while healthcare systems are becoming more complex, a dynamic that makes it extremely challenging to measure the technology's impact, Gallix and Chong added.

"This is a paradox that our colleagues who perform research in AI sometimes have difficulty understanding: Just because a technology seems to perform well in a particular population does not mean that its practical implementation in a healthcare system will have a positive impact on patient care," they stated.

A moral obligation?

Not everybody is convinced of the value and benefits of AI. Fearing that the technology will destroy jobs and destabilize institutional balances that have taken years to establish, many believe that radiologists must protect their profession and personal data to limit the impact of AI, according to the authors.

"But the opposite argument could easily be made that, indeed, we have a moral obligation to use and promote AI tools that will improve the performance of radiologists and accelerate decision-making processes while limiting the cost of imaging examinations," they wrote. "It is our responsibility -- with the help of regulators -- to define a strong and rigorous assessment process that is required if we want these new tools to truly improve the quality of care."

They emphasized that it's not only a matter of showing how well AI performs. It's also critical to formulate precise hypotheses and define the populations for which these tools can be appropriately applied. What's more, strong tests are needed to demonstrate robustness in populations and health systems other than those for which the algorithms were developed, and evaluations need to be performed on how the technology will change medical decision-making and patient management, according to the authors. Effectiveness in improving patient outcomes from a clinical point of view also needs to be documented, and AI performance needs to be monitored after regulatory approval -- as is commonly done in the pharmacovigilance process, they wrote.

"With these precautions in place, no one should be afraid of the impact of AI in medicine, including the impact on the profession of radiologists, which will be major, with no doubt," they concluded.